Natural Language Processing (NLP) is a cornerstone of artificial intelligence, enabling computers to understand, interpret, and generate human language. Its significance lies in its ability to facilitate seamless communication between humans and machines, enabling a wide range of applications across industries. From virtual assistants to sentiment analysis and language translation, NLP enhances user experiences and makes text-heavy tasks more efficient. Despite its advancements, NLP still faces challenges such as language ambiguity and cultural nuances. However, with ongoing research and technological progress, NLP holds immense promise for revolutionizing how we interact with technology and harness the power of human language.

In this blog, we will explore natural language processing, from its basics to the approaches it follows and its future.

What is NLP?

Natural language processing (NLP) is the branch of artificial intelligence (AI) that lets computers and digital devices interpret, manipulate, and comprehend human language, whether it arrives as text or as speech with different accents, intonations, and pitches, often in real time.

Today, many people use NLP-backed artificial intelligence in various forms, such as virtual assistants (Siri, Alexa), voice navigation in GPS systems, and chatbots. NLP combines computational linguistics with statistics and machine learning models to understand and generate text or speech.

An NLP model can:

- Translate text or voice input from one language to another.

- Summarize a large volume of text.

- Respond to your voice commands or texts.

- Generate text or graphics according to your requirements.

- Recognize individuals based on their voices.

Why is NLP important?

Different communities use different languages, slang, dialects, grammatical irregularities, and tones in their day-to-day conversations. Natural language processing aims to help computers understand these differences accurately so that AI and ML models can interact well with humans.

Companies embed NLP techniques in their machines and AI-powered software systems to streamline their operations and provide a smoother user experience. For instance, e-commerce apps like Amazon and Flipkart, and food delivery apps like Zomato and Uber Eats, use AI-powered customer support agents to resolve customer queries quickly and easily. This also lets the companies filter incoming requests so that their human customer representatives can focus only on complex cases, saving both time and resources.

The following are some other examples of NLP being used by companies to automate their tasks:

- Process, analyze, and archive large documents.

- Analyze customer feedback or call center recordings.

- Power automated chat assistants.

- Classify and extract texts.

How does NLP work?

Many natural language processing techniques are used to enable computers to understand human languages. Implementing NLP is typically divided into three phases:

Data Preprocessing

Data preprocessing refers to the initial steps taken to prepare and clean data so that machines can analyze it. It involves cleaning, transforming, and organizing data for further processing. Depending on the needs, it may include removing duplicates, handling missing values, encoding categorical variables, or isolating the text an algorithm can work with. The following are some common text preprocessing techniques:

Tokenization-

Tokenization is a fundamental step in natural language processing and text mining. It breaks a text down into smaller units called tokens, such as words, phrases, or other meaningful fragments, converting the text into a form that computers can easily process.
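To make this concrete, here is a minimal word-level tokenizer sketch using Python's built-in `re` module. The regular expression `TOKEN_PATTERN` is an assumption chosen for illustration: it keeps simple contractions like "isn't" together and splits punctuation into separate tokens. Production systems often use more elaborate rules or learned subword tokenizers.

```python
import re

# Illustrative pattern (an assumption, not a standard): a run of letters/digits,
# optionally followed by an apostrophe suffix ("isn't"), or a single
# punctuation character that is neither whitespace nor alphanumeric.
TOKEN_PATTERN = re.compile(r"[A-Za-z0-9]+(?:'[A-Za-z]+)?|[^\sA-Za-z0-9]")


def tokenize(text: str) -> list[str]:
    """Split text into word and punctuation tokens."""
    return TOKEN_PATTERN.findall(text)


print(tokenize("NLP isn't magic: it's math!"))
# ['NLP', "isn't", 'magic', ':', "it's", 'math', '!']
```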

Stop Word Removal-

In this process, very common words such as "the", "is", and "of" are removed so that the focus falls on the words that carry the most information about the input text.
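A minimal sketch of stop-word removal follows. The `STOP_WORDS` set is a tiny hypothetical list for illustration; NLP toolkits ship much longer, language-specific lists, and the right list depends on the task.

```python
# Hypothetical, deliberately tiny stop-word list.
STOP_WORDS = {"a", "an", "the", "is", "are", "was", "of", "on", "in", "and", "to", "it"}


def remove_stop_words(tokens: list[str]) -> list[str]:
    """Drop tokens whose lowercase form appears in STOP_WORDS."""
    return [t for t in tokens if t.lower() not in STOP_WORDS]


tokens = ["The", "battery", "of", "this", "phone", "is", "amazing"]
print(remove_stop_words(tokens))  # ['battery', 'this', 'phone', 'amazing']
```

Note that "this" survives only because it is not in the list; whether such words should be removed is a design choice for each application.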

Lemmatization & Stemming-

Both of these techniques reduce words to their root or base form. For example, the word "running" would be lemmatized or stemmed to "run." The difference is that lemmatization relies on vocabulary and grammatical analysis, so it uses more computational resources but returns valid dictionary words (lemmas). Stemming, on the other hand, applies simple suffix-stripping rules, so it is quicker but may produce invalid or inaccurate forms.
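The contrast can be sketched with two toy functions. Both are hypothetical illustrations, not real algorithms from any library: `toy_stem` is a crude suffix stripper (much simpler than, for example, the Porter stemmer), and `toy_lemmatize` looks words up in a small hand-made table standing in for a real dictionary.

```python
# Toy suffix stripper: checks suffixes in this order and removes the first match.
SUFFIXES = ("ing", "ies", "ed", "es", "s")

# Toy lemma table standing in for a real dictionary lookup.
LEMMAS = {"running": "run", "ran": "run", "studies": "study", "mice": "mouse", "better": "good"}


def toy_stem(word: str) -> str:
    """Strip one suffix with crude rules; the result may not be a real word."""
    for suffix in SUFFIXES:
        # Keep at least three characters of stem so short words survive.
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            stem = word[: -len(suffix)]
            # Undo consonant doubling, e.g. "runn" -> "run" (but keep "ll", "ss").
            if stem[-1] == stem[-2] and stem[-1] not in "aeiouls":
                stem = stem[:-1]
            return stem
    return word


def toy_lemmatize(word: str) -> str:
    """Return the dictionary form if known, else the word unchanged."""
    return LEMMAS.get(word, word)


for w in ["running", "studies", "mice"]:
    print(w, toy_stem(w), toy_lemmatize(w))
# running run run
# studies stud study
# mice mice mouse
```

The stemmer is fast but turns "studies" into "stud" and leaves "mice" untouched, while the lookup-based lemmatizer returns valid base forms only for words it knows.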

Part-of-speech Tagging-

Part-of-speech (POS) tagging assigns each word in a text a label indicating its grammatical role, such as noun, verb, or adjective.

Training-

Once the data has been preprocessed, a model is built and trained on it. The following are two commonly used approaches to building natural language processing (NLP) models.

Rule-Based-

Rule-based NLP models rely on predefined linguistic rules. The system reads text and applies hand-written rules that identify words, their meanings, and grammatical structure in order to interpret text or speech and generate responses.
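As a rough illustration, the sketch below is a rule-based intent classifier of the kind a simple support chatbot might use. The patterns and intent names in `RULES` are hypothetical; every behavior comes from a hand-written rule, and nothing is learned from data.

```python
import re

# Hypothetical hand-written rules: each regex maps to an intent label.
# Rules are checked in order, so earlier rules take priority.
RULES = [
    (re.compile(r"\b(hi|hello|hey)\b", re.IGNORECASE), "greeting"),
    (re.compile(r"\bwhere\b.*\border\b", re.IGNORECASE), "track_order"),
    (re.compile(r"\b(refund|money back)\b", re.IGNORECASE), "refund_request"),
]


def classify_intent(text: str) -> str:
    """Return the intent of the first matching rule, or 'unknown'."""
    for pattern, intent in RULES:
        if pattern.search(text):
            return intent
    return "unknown"


print(classify_intent("Where is my order?"))    # track_order
print(classify_intent("I want my money back"))  # refund_request
```

Rules like these are transparent and predictable, but any phrasing the author did not anticipate falls through to "unknown", which is the main limitation the machine learning approach addresses.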

Machine Learning based-

The machine learning approach uses statistics to train NLP models. Instead of following hand-written rules, models learn patterns from example text (the training data), and they can keep adjusting and improving as they are exposed to more data. In this way, machine learning lets NLP models refine their behavior through repeated training rather than manual rule-writing.

Testing & Deployment-

After the model is trained, it is rigorously tested on a variety of inputs, including data it did not see during training, and reviewed for ethical concerns to help prevent misuse. The model is then deployed or integrated into existing systems, where it takes text or speech as input and predicts outcomes or takes actions based on the requirements.
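Testing usually means comparing a model's predictions with known correct labels on held-out data. The sketch below computes accuracy, plus precision and recall for one chosen label; the label names and example data are hypothetical.

```python
def evaluate(gold: list[str], predicted: list[str], positive: str) -> dict[str, float]:
    """Accuracy over all labels, plus precision and recall for the 'positive' label."""
    if not gold or len(gold) != len(predicted):
        raise ValueError("gold and predicted must be non-empty and equally long")
    pairs = list(zip(gold, predicted))
    correct = sum(g == p for g, p in pairs)
    tp = sum(g == positive and p == positive for g, p in pairs)  # true positives
    fp = sum(g != positive and p == positive for g, p in pairs)  # false positives
    fn = sum(g == positive and p != positive for g, p in pairs)  # false negatives
    return {
        "accuracy": correct / len(pairs),
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }


gold = ["pos", "neg", "pos", "neg", "pos"]
pred = ["pos", "pos", "neg", "neg", "pos"]
print(evaluate(gold, pred, positive="pos"))
```

Here 3 of 5 predictions are correct (accuracy 0.6), and 2 of the 3 "pos" predictions are right, as are 2 of the 3 true "pos" items (precision and recall both 2/3).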

What are the approaches to natural language processing?

Below are some common approaches to NLP:

Supervised NLP:

Supervised models are trained on a well-labeled dataset, where each piece of data is associated with a known output. In NLP, supervised learning is commonly used for tasks such as document classification, where each text is paired with a label that indicates the correct interpretation (for example, "spam" or "not spam").
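A classic supervised text classifier is multinomial Naive Bayes. The minimal sketch below trains on a hypothetical four-document labeled dataset and uses add-one (Laplace) smoothing; it is meant to show the idea of learning from labeled examples, not to be a production classifier.

```python
import math
from collections import Counter, defaultdict


def train_nb(docs: list[tuple[list[str], str]]):
    """Count documents per label and word occurrences per label."""
    label_counts = Counter(label for _, label in docs)
    word_counts = defaultdict(Counter)
    vocab = set()
    for tokens, label in docs:
        word_counts[label].update(tokens)
        vocab.update(tokens)
    return label_counts, word_counts, vocab


def predict_nb(model, tokens: list[str]) -> str:
    """Pick the label maximizing log P(label) + sum of log P(word | label)."""
    label_counts, word_counts, vocab = model
    total_docs = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label, n_docs in label_counts.items():
        score = math.log(n_docs / total_docs)
        total_words = sum(word_counts[label].values())
        for tok in tokens:
            if tok not in vocab:
                continue  # ignore words never seen in training
            # Add-one smoothing so unseen (word, label) pairs don't give log(0).
            score += math.log((word_counts[label][tok] + 1) / (total_words + len(vocab)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label


train = [
    ("win a free prize now".split(), "spam"),
    ("claim your free money".split(), "spam"),
    ("meeting moved to monday".split(), "ham"),
    ("see you at lunch monday".split(), "ham"),
]
model = train_nb(train)
print(predict_nb(model, "free prize inside".split()))  # spam
print(predict_nb(model, "lunch on monday".split()))    # ham
```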

Unsupervised NLP:

Unsupervised models find structures or underlying patterns in training data that carries no labels. For example, a statistical language model can learn which words tend to follow one another from raw text alone. A familiar application is the auto-completion feature on our digital devices, which suggests relevant words based on the user's past typing.
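A minimal sketch of that idea is a bigram model: count which word follows which in unlabeled text, then suggest the most frequent followers. The tiny corpus below is hypothetical; real keyboards use far larger models and more context than one previous word.

```python
from collections import Counter, defaultdict


def train_bigrams(corpus: list[str]) -> dict[str, Counter]:
    """Count next-word frequencies from raw, unlabeled sentences."""
    following = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            following[prev][nxt] += 1
    return following


def suggest(following: dict[str, Counter], prev_word: str, k: int = 2) -> list[str]:
    """Return up to k of the most frequent words seen after prev_word."""
    counts = following.get(prev_word.lower(), Counter())
    return [word for word, _ in counts.most_common(k)]


corpus = ["see you tomorrow", "see you soon", "see you tomorrow morning", "thank you so much"]
model = train_bigrams(corpus)
print(suggest(model, "you", k=1))  # ['tomorrow']
```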

Natural Language Understanding (NLU):

As the name suggests, NLU is the branch of NLP, and of artificial intelligence more broadly, that enables models to understand the meaning and intent of human language. It forms the basis of applications like virtual assistants and chatbots.

Natural Language Generation (NLG):

NLG generates text or speech in human languages from structured data or other input forms. It converts linguistic or non-linguistic data into grammatically correct natural language. It is used in a wide range of applications like report generation, virtual assistants, content creation, and so on.
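The simplest form of NLG is template-based generation, sketched below for a report-generation use case. The function name, fields, and sentence template are hypothetical; modern systems often use neural language models instead, but the input (structured data) and output (a grammatical sentence) are the same.

```python
def sales_report(region: str, quarter: str, revenue: float, previous: float) -> str:
    """Turn structured sales figures into one natural-language sentence."""
    # Multiply before dividing to keep round numbers exact where possible.
    change_pct = (revenue - previous) * 100 / previous
    if change_pct > 0:
        trend = f"rose {change_pct:.1f}% to"
    elif change_pct < 0:
        trend = f"fell {abs(change_pct):.1f}% to"
    else:
        trend = "was unchanged at"
    return f"{region} revenue in {quarter} {trend} ${revenue:,.0f}."


print(sales_report("North", "Q2", 45000, 40000))
# North revenue in Q2 rose 12.5% to $45,000.
```

Choosing the verb ("rose", "fell", "was unchanged at") from the data is a small example of the decisions an NLG system makes to keep its output grammatical and accurate.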

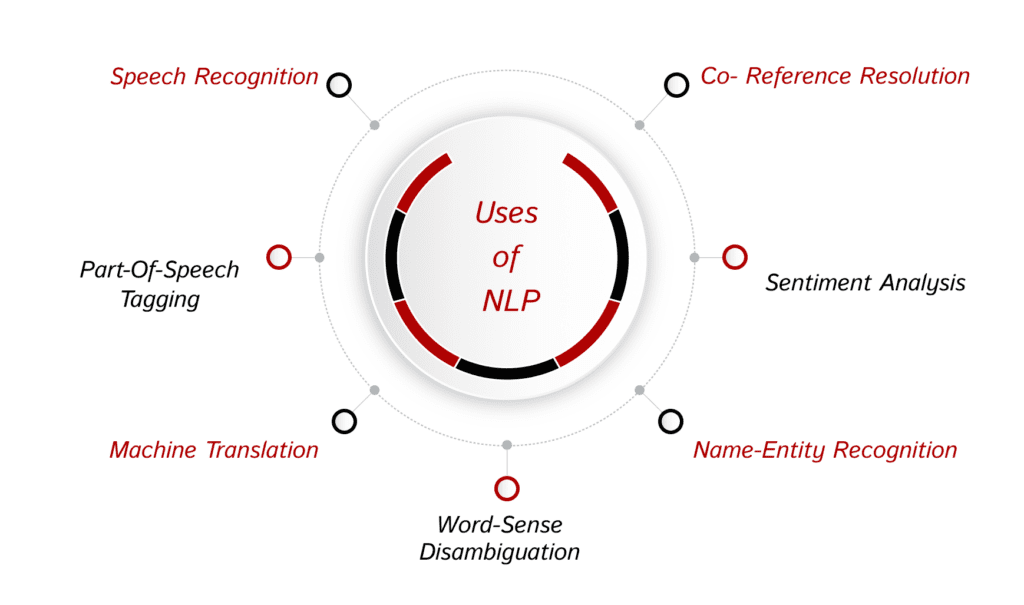

What is NLP used for?

Human language is full of ambiguity, and speakers differ in language, accent, and pitch, which makes it hard for machines to understand human language directly. NLP helps bridge this gap: it powers tasks such as sentiment analysis and machine translation, automates routine work, extracts insights from vast amounts of text data, and improves communication between humans and machines. Below are some NLP applications that are widely used across industries:

Speech Recognition:

Speech recognition, also known as speech-to-text, converts spoken commands or responses into text data. Widely used examples of NLP in voice recognition and chatbots are Amazon's Alexa and Alphabet's Google Assistant, which use speech recognition to process, understand, and respond to voice commands.

Part-Of-Speech Tagging:

Also referred to as "grammatical tagging," part-of-speech tagging is a crucial process that labels each word or piece of text with the part of speech it belongs to. For example, in the sentence "The cat is on the mat," "cat" and "mat" are nouns, "The" and "the" are determiners, "is" is a verb, and "on" is a preposition.
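A minimal sketch of a tagger for the example sentence is shown below. It combines a hypothetical mini-lexicon with crude suffix guesses for unknown words; the tag names loosely follow the Universal POS tag set, where prepositions are tagged `ADP` (adposition). Real taggers learn these decisions from annotated corpora and use the surrounding context.

```python
# Hypothetical mini-lexicon; real taggers are trained on annotated text.
LEXICON = {
    "the": "DET", "a": "DET",
    "cat": "NOUN", "mat": "NOUN", "dog": "NOUN",
    "is": "VERB", "sat": "VERB",
    "on": "ADP", "under": "ADP",
}


def pos_tag(tokens: list[str]) -> list[tuple[str, str]]:
    """Tag each token via lexicon lookup, falling back to crude guesses."""
    tags = []
    for tok in tokens:
        word = tok.lower()
        if word in LEXICON:
            tag = LEXICON[word]
        elif not word.isalnum():
            tag = "PUNCT"
        elif word.endswith(("ing", "ed")):
            tag = "VERB"  # suffix heuristic, often wrong ("red", "ceiling")
        elif word.endswith("ly"):
            tag = "ADV"
        else:
            tag = "NOUN"  # default guess for unknown words
        tags.append((tok, tag))
    return tags


print(pos_tag(["The", "cat", "is", "on", "the", "mat", "."]))
```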

Machine Translation:

Machine Translation is the automated translation of a voice command or text from one language to another via computer algorithms. Machine translation systems use different approaches, including rule-based translation (RBT), statistical machine translation (SMT), and neural machine translation (NMT), to convert text or speech from the source language to the target language.

Word-Sense Disambiguation

Word-sense disambiguation is the task of selecting the intended meaning of a word that has several possible meanings, based on its context. For example, the word "bank" has a different meaning in each of these sentences: "There is a boat at the river bank" and "I deposited my money in the bank."
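One classic technique is the simplified Lesk algorithm: choose the sense whose dictionary definition (gloss) shares the most words with the sentence. The sense names, glosses, and stop-word list below are hypothetical, written for this example rather than taken from a real dictionary.

```python
# Hypothetical sense inventory with short glosses.
SENSES = {
    "bank": {
        "river_bank": "sloping land beside a river or lake where water and boats meet",
        "financial_bank": "institution that accepts deposits of money and lends money",
    }
}
STOP = {"a", "an", "the", "at", "in", "of", "or", "and", "my", "i",
        "there", "is", "where", "that", "beside"}


def content_words(text: str) -> set[str]:
    """Lowercase words with punctuation and stop words removed."""
    return {w.strip('.,!?"').lower() for w in text.split()} - STOP


def disambiguate(word: str, sentence: str) -> str:
    """Simplified Lesk: pick the sense with the largest gloss/context overlap."""
    context = content_words(sentence) - {word}
    best_sense, best_overlap = None, -1
    for sense, gloss in SENSES[word].items():
        overlap = len(context & content_words(gloss))
        if overlap > best_overlap:
            best_sense, best_overlap = sense, overlap
    return best_sense


print(disambiguate("bank", "There is a boat at the river bank"))  # river_bank
print(disambiguate("bank", "I deposited my money in the bank"))   # financial_bank
```

Exact word matching is brittle ("boat" does not match "boats"), which is why practical systems add stemming or use learned word representations.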

Named-Entity Recognition

Named-entity recognition (NER) involves identifying and classifying different entities (like people, places, organizations, dates, or things) in a text. It extracts and categorizes these entities to provide meaningful information for downstream NLP tasks. For example, in the sentence "I visited Paris and enjoyed the Eiffel Tower," "Paris" is a GPE (geopolitical entity), and "Eiffel Tower" is a facility (a landmark).
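The simplest NER approach is a gazetteer: a lookup table of known entity names, matched against the text with the longest match winning. The table below is hypothetical and uses the GPE and FAC (facility) labels from the example; statistical NER models go further by recognizing entities they have never seen, using context.

```python
# Hypothetical gazetteer mapping lowercase token sequences to entity types.
GAZETTEER = {
    ("eiffel", "tower"): "FAC",
    ("paris",): "GPE",
    ("france",): "GPE",
}
MAX_LEN = max(len(key) for key in GAZETTEER)


def find_entities(tokens: list[str]) -> list[tuple[str, str]]:
    """Scan left to right, preferring the longest gazetteer match at each position."""
    entities, i = [], 0
    while i < len(tokens):
        for n in range(min(MAX_LEN, len(tokens) - i), 0, -1):
            key = tuple(t.lower() for t in tokens[i:i + n])
            if key in GAZETTEER:
                entities.append((" ".join(tokens[i:i + n]), GAZETTEER[key]))
                i += n
                break
        else:
            i += 1  # no entity starts here; move on
    return entities


tokens = ["I", "visited", "Paris", "and", "enjoyed", "the", "Eiffel", "Tower", "."]
print(find_entities(tokens))  # [('Paris', 'GPE'), ('Eiffel Tower', 'FAC')]
```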

Sentiment analysis

Sentiment analysis refers to identifying subjective qualities like emotions, attitude, mood, sarcasm, confusion, and suspicion within large amounts of text. It is widely used on social media for various purposes like customer feedback analysis, brand reputation management, market research, etc.
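A minimal lexicon-based sentiment scorer is sketched below: each known word carries a score, a preceding negator flips it, and the sum decides the label. The word scores are hypothetical; published lexicons (such as the one used by VADER) are far larger, and modern systems often use trained models instead. This approach also illustrates why sarcasm is hard: "Great, it broke again!" would score as positive.

```python
# Hypothetical word scores and negators.
SENTIMENT_LEXICON = {"love": 2, "great": 2, "good": 1, "slow": -1, "bad": -2, "terrible": -3}
NEGATORS = {"not", "never", "no"}


def sentiment(text: str) -> tuple[str, int]:
    """Return (label, score) by summing word scores, flipping after negators."""
    words = [w.strip(".,!?").lower() for w in text.split()]
    score = 0
    for i, word in enumerate(words):
        value = SENTIMENT_LEXICON.get(word, 0)
        if i > 0 and words[i - 1] in NEGATORS:
            value = -value  # e.g. "not good" counts as negative
        score += value
    label = "positive" if score > 0 else "negative" if score < 0 else "neutral"
    return label, score


print(sentiment("I love this phone, the camera is great!"))   # ('positive', 4)
print(sentiment("The delivery was not good and very slow."))  # ('negative', -2)
```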

Co-reference resolution:

Co-reference resolution means identifying or linking the words or phrases in a text that refer to the same entity or concept. Identifying pronouns and noun phrases that refer to previously mentioned entities is important for understanding text.

Challenges of Natural Language Processing

The challenges in natural language processing stem from the dynamic and often ambiguous nature of human language, such as the following:

- Precision:

Computers have traditionally interacted with humans through programming languages, from low-level to high-level, which are precise and unambiguous. Human language, by contrast, is full of ambiguity, and people differ in tone, accent, slang, dialect, voice, and pitch. Building NLP models that handle all of this accurately is challenging.

- The tone of voice and inflection:

NLP systems still struggle to recognize tone and intent in human speech. For instance, they do not detect sarcasm easily, and semantic analysis remains challenging, because these skills require understanding both the meaning behind words and the context of the conversation. As a result, abstract or figurative language is tricky for programs to understand.

- Evolving use of language:

Another challenge that natural language processing (NLP) models encounter is that human language is constantly changing. Because language is fluid and its rules are not set in stone, it can be difficult to ensure that these changes are accurately reflected in NLP models, especially as there is no set standard for updating them.

- Bias:

It is important to recognize that NLP systems may exhibit bias when they learn from training data that contains biases. This can pose challenges in various fields, such as healthcare and employment, where biased decision-making can negatively affect individuals.

Advancements in the field of NLP

NLP is a revolutionary field, and over the years, it has witnessed significant advancements with breakthroughs in deep learning and transformer-based models like GPT-3 and Gemini. Let’s discuss some key advancements in this field:

Transformer-Based Models:

Transformers have reduced many of the problems associated with traditional recurrent neural networks (RNNs) and convolutional neural networks (CNNs) by introducing self-attention mechanisms, which let each word in a sequence weigh its relationship to every other word. Because the transformer architecture processes all the tokens in a sequence in parallel rather than one at a time, it scales to much larger datasets and enables efficient and accurate natural language understanding. The following are some prominent examples of transformer-based NLP models:

GPT-3:

OpenAI's GPT-3 is a large language model (LLM) with 175 billion parameters and was one of the largest language models ever built at the time of its release. Models from the GPT family power ChatGPT, which has made NLP-backed AI widely accessible; people now use it daily in their personal and professional lives. Its groundbreaking text generation ability has various applications like chatbots, creative writing, and content generation.

Google Gemini:

Google Gemini is a family of pre-trained large language models comparable to the GPT models behind ChatGPT. It is natively multimodal: it understands human language and can combine it with other forms of information like images, audio, video, and code. Gemini was launched in three versions, Ultra, Pro, and Nano, which differ in size and in their capability to handle complex queries.
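The self-attention mechanism that GPT-3, Gemini, and other transformer models build on can be sketched in a few lines. The sketch below implements single-head scaled dot-product attention, softmax(QK^T / sqrt(d_k))V, in plain Python with no masking, batching, or multiple heads; the tiny input vectors and weight matrices are hypothetical stand-ins for learned parameters.

```python
import math


def softmax(xs: list[float]) -> list[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def matmul(a: list[list[float]], b: list[list[float]]) -> list[list[float]]:
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def self_attention(x, wq, wk, wv):
    """One attention head: every token attends to every token in x."""
    q, k, v = matmul(x, wq), matmul(x, wk), matmul(x, wv)
    d_k = len(k[0])
    out = []
    for qi in q:
        # Similarity of this token's query to every key, scaled by sqrt(d_k).
        scores = [sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d_k) for kj in k]
        weights = softmax(scores)  # attention weights sum to 1
        # Output is the attention-weighted average of the value vectors.
        out.append([sum(w * vj[c] for w, vj in zip(weights, v)) for c in range(len(v[0]))])
    return out


x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # three tokens, 2-dimensional embeddings
identity = [[1.0, 0.0], [0.0, 1.0]]
print(self_attention(x, identity, identity, identity))
```

Because each token's scores against all other tokens are independent computations, they can run in parallel, which is the property that lets transformers scale better than RNNs.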

Transfer Learning:

Transfer learning means reusing what a model has learned from past data or tasks on a new, related task. This approach has greatly boosted the use of NLP and made it accessible to a broader audience, including developers with limited knowledge of NLP techniques. Instead of training a model from scratch, developers can fine-tune a pre-trained model, which requires fewer resources, such as less data and time.

Multimodal NLP:

Earlier, NLP was limited to text data, but recent advancements have expanded its domains to include visual and voice data. This feature extends its usage in many other applications, including image captioning, visual question-answering, and speech-to-text transcription.

Future of Natural Language Processing

In the future, we can expect more accurate language understanding that enables machines to comprehend the nuances and context of human language with greater precision. This will pave the way for more sophisticated conversational AI systems capable of engaging in natural, context-aware conversations. Future NLP models will likely offer improved multilingual and cross-lingual capabilities, facilitating seamless communication across diverse linguistic landscapes. Personalization and contextualization will also be key focuses as NLP evolves to deliver tailored responses based on individual preferences and situational context. As these advancements continue, ethical considerations will play an increasingly significant role in ensuring that NLP technologies are developed and deployed in a fair, unbiased manner. Overall, the future of NLP promises to empower users with more intuitive, efficient, and human-like interactions with technology.

Looking for the best AI development, integration, and consulting company? Look no further because Build Future AI has it all!